Arthur C. Clarke famously wrote that any sufficiently advanced technology is
indistinguishable from magic. End users feel that magic the first time a
technology comes to market: imagine the reaction of the first regular person
to see fire, use an engine, listen to a radio or send an email. For
technology developers, the sense of magic comes later as layers of abstraction
pile up. The developer of the latest social-media chat app has no need to know
how the underlying operating system, computers, Internet and voice codecs
work. Knowledge of these details is unnecessary, and developers are far more
productive when they build atop layers developed by others. For these reasons,
system developers favor creating software over custom hardware. The software
realm is abstract, unconstrained by time, power, cost, size, heat and other
realities.

Sometimes, however, these realities intrude and software running on a server
proves inefficient and slow. This can happen even in cloud data centers, where
acres of servers provide the illusion of unbounded computing power, storage
capacity and network connectivity. As a result, cloud companies find it
advantageous to offload functions from servers’ main processors in
certain circumstances. At the same time, they do not want to entirely forgo
the benefits of software implementations or force changes to their
server processors’ software stack.

Cloud companies, therefore, are supplementing or offloading their server
processors with companion processors or SoCs dedicated to key functions. These
functions are still software-based and unobtrusively slot into the
server’s software stack. This approach is becoming common in storage
applications, such as photo-sharing services, and networking applications.
Networking applications are less obvious, being hidden behind the scenes of
consumer-facing services. Think, however, about the wizardry that takes the
search query you type in your web browser and ultimately routes you to a
server half a continent away containing the answer to your question.

Storage Offload

In the cloud, solid-state drives (SSDs) provide hot storage, and a single
storage system may serve more than 100 applications concurrently owing to the
cloud’s multitenancy. This concurrency grows as host processors scale
out by integrating more CPUs. Solid-state drives, however, optimize sequential
writes like their rotating-media forebears, as if it were still 2005 and
drives still served a single workload. The result is that write throughput to
the SSD is lower, and write latency is higher and more variable. Changes on
the host to adapt to an SSD’s performance characteristics may need to be
reimplemented when the company installs the next generation of SSD. SSD
generations track flash memory and are shorter than host-processor
generations.

It’s 2018 now and cloud companies have slipped behind

technologies’ steering wheel. Among the technologies that have surfaced

is a contribution from Microsoft Azure to the Open Compute Project for a new

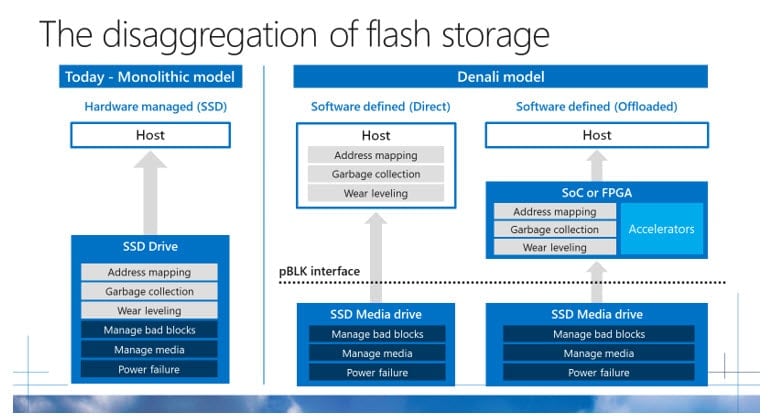

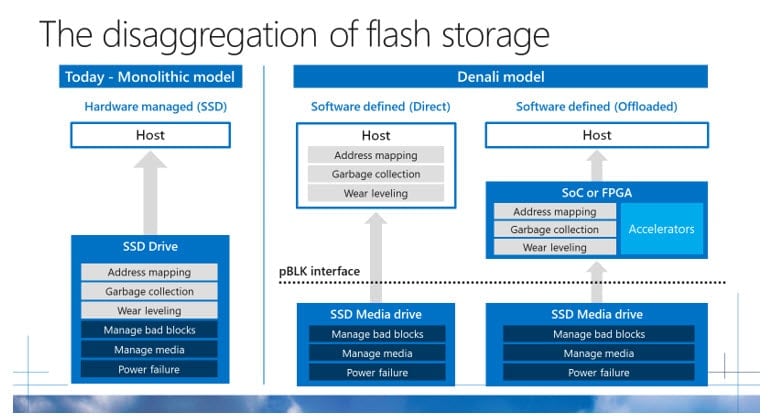

architecture for cloud SSD storage, Project Denali. Denali removes data-layout

functions from the SSD, redesigning them to address cloud workloads. They can

run on the host processor, consuming cycles otherwise used for applications.

Alternatively, they can run on an accelerator.

Microsoft’s General Manager of Azure Hardware Infrastructure, Kushagra
Vaid, describes the concept in his blog post, which includes this diagram:

Keen to help these companies usher in a better way to dish our

blogs

and

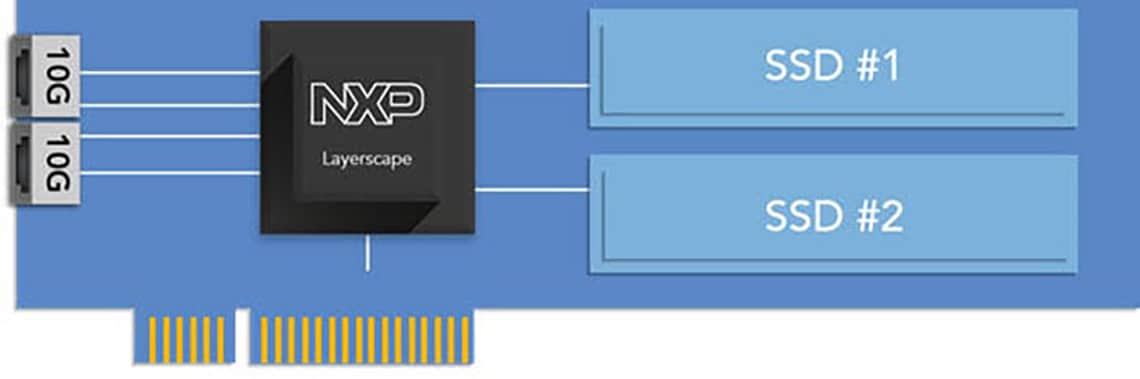

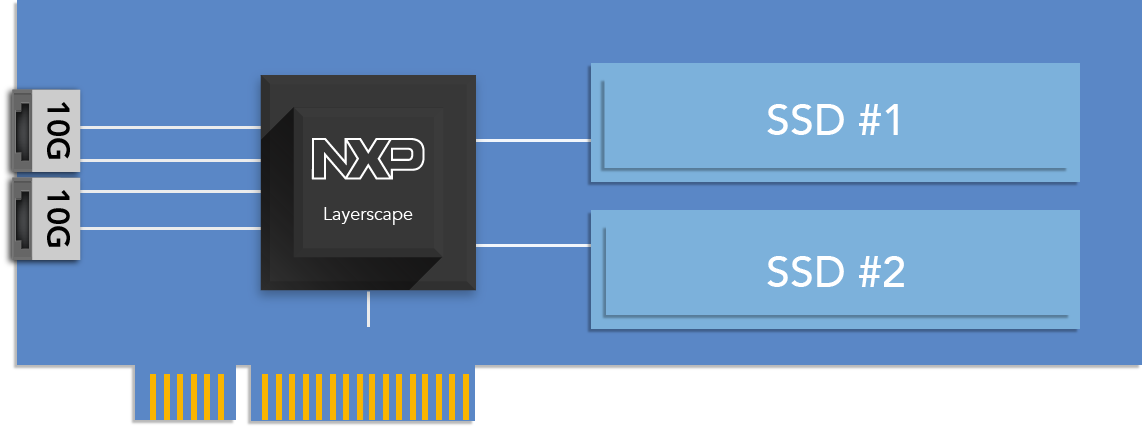

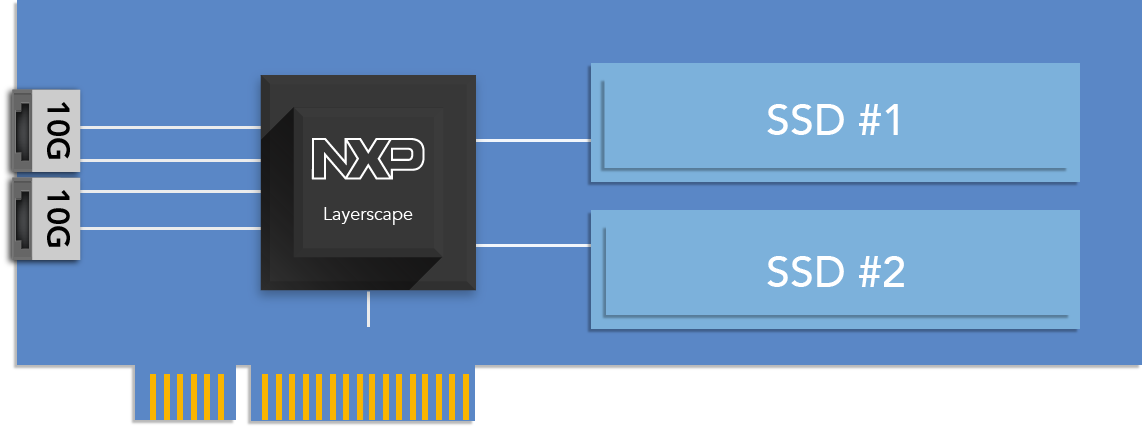

videos, NXP supports this architecture with an add-in card based on a Layerscape

processor. This design uses the multiple PCIe controllers integrated in

Layerscape

processors to connect to the host processor and M.2 SSD. The processor

performs the functions in the gray boxes in Vaid’s figure, the

functions removed from today’s SSD. As the block diagram below shows,

the card has two physical SSDs. The software on the Layerscape processor

combines their capacity and chops it up to present it as more than 100 virtual

drives to the virtual machines on the host. Owing to the hardware accelerators

Layerscape integrates, the NXP storage accelerator can also efficiently

compress and encrypt data—functions that would otherwise consume

host-processor cycles.

Storage accelerator based on a Layerscape processor.
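To make the idea of pooling two physical SSDs and carving the pool into many virtual drives more concrete, here is a minimal Python sketch. It is an illustration only, not NXP's software or the Denali data layout: the names `Extent`, `carve_virtual_drives` and `translate` are hypothetical, and the block counts are made-up numbers chosen so the arithmetic is easy to follow.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Extent:
    """A contiguous run of blocks on one physical SSD (hypothetical model)."""
    ssd: int      # index of the physical SSD
    offset: int   # first block of the run on that SSD
    length: int   # number of blocks in the run


def carve_virtual_drives(ssd_blocks, n_drives):
    """Pool the SSD capacities and split the pool into equal virtual drives.

    Returns one list of Extents per virtual drive. A drive that straddles
    the boundary between two SSDs simply gets two extents.
    """
    per_drive = sum(ssd_blocks) // n_drives
    drives = []
    ssd, used = 0, 0  # allocation cursor: current SSD and blocks used on it
    for _ in range(n_drives):
        remaining = per_drive
        extents = []
        while remaining > 0:
            free = ssd_blocks[ssd] - used
            if free == 0:          # current SSD is full, move to the next
                ssd, used = ssd + 1, 0
                continue
            take = min(free, remaining)
            extents.append(Extent(ssd, used, take))
            used += take
            remaining -= take
        drives.append(extents)
    return drives


def translate(drives, drive_id, lba):
    """Map a virtual drive's logical block to (physical SSD, physical block)."""
    for ext in drives[drive_id]:
        if lba < ext.length:
            return ext.ssd, ext.offset + lba
        lba -= ext.length
    raise IndexError("LBA beyond virtual drive capacity")


# Two SSDs of 1000 blocks each, presented as 128 virtual drives of 15 blocks.
drives = carve_virtual_drives([1000, 1000], 128)
print(translate(drives, 0, 14))   # (0, 14)
print(translate(drives, 66, 12))  # (1, 2): drive 66 straddles both SSDs
```

A real accelerator would also have to handle wear, garbage collection and per-tenant isolation, none of which this sketch attempts; it only shows the address translation that lets each virtual machine see its own drive.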

Networking Offload

Cloud companies also turn to add-in cards to accelerate network functions.
Standard network interface cards (NICs) excel at basic Ethernet connectivity,
handling overlay protocols for a limited number of flows and offloading
stateless TCP functions from the host. Unfortunately for cloud service
providers, these NICs lack the flexibility to implement proprietary functions
or operate at a higher network layer, such as IPsec. They are also constrained
by the limited memory integrated in the NIC’s controller chip. Cloud
companies, therefore, have turned to intelligent NICs, also known as smart or
programmable NICs. As the latter name implies, these cards have both
general-purpose processing capability and dedicated network functions. The
easiest way to get this combination is to use a processor like those in the
Layerscape family, the whole purpose of which is melding general-purpose and
network-specific processing.

Layerscape processors with the NXP Data Plane Acceleration Architecture (DPAA)

can parse complex encapsulations, apply quality of service rules through

sophisticated queuing mechanisms and steer packets to the onboard CPUs or the

host processor. Off-chip memory eliminates a critical limitation of dedicated

NIC controller chips. The Layerscape SoC can also fully offload IPsec and

other security functions from the host. The saving in host CPU cycles by

offloading IPsec is significant. A 25-core server host may need to allocate

20% of its cores just to handle IPsec, which is uneconomical considering the

server processor could cost thousands of dollars. Just like the host

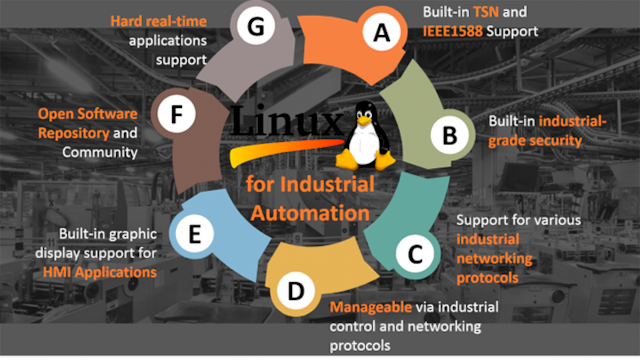

processor, Layerscape’s onboard CPUs can run Linux and offer cloud

companies the ability to easily move functions between the host CPU and the

SoC CPUs or to add their own special functions. The similarity ends there.

Integrating PCIe and Ethernet interfaces, Layerscape family members are an

economical, power-efficient and small-form factor solution to building an

iNIC NXP also supplies important enabling software and support services.
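The parse, queue and steer flow described above can be modeled in a few lines of Python. This is a toy sketch under stated assumptions, not the DPAA programming interface: the `Packet`, `classify` and `Steering` names are hypothetical, and the rule itself (IPsec ESP traffic goes to the SoC's CPUs, everything else to the host, with DSCP 46 and above treated as high priority) is an arbitrary example policy.

```python
from collections import deque
from dataclasses import dataclass

ESP = 50  # IANA-assigned IP protocol number for IPsec ESP
EF = 46   # DSCP code point for Expedited Forwarding


@dataclass
class Packet:
    protocol: int  # IP protocol number from the parsed header
    dscp: int      # DSCP value from the parsed header


def classify(pkt):
    """Return (destination, priority) for a parsed packet.

    Example policy only: IPsec traffic stays on the SoC, the rest goes to
    the host; priority 0 is served before priority 1.
    """
    dest = "soc" if pkt.protocol == ESP else "host"
    prio = 0 if pkt.dscp >= EF else 1
    return dest, prio


class Steering:
    """Two strict-priority queues per destination."""

    def __init__(self):
        self.queues = {(d, p): deque() for d in ("soc", "host") for p in (0, 1)}

    def enqueue(self, pkt):
        self.queues[classify(pkt)].append(pkt)

    def dequeue(self, dest):
        """Pop the next packet for a destination, high priority first."""
        for prio in (0, 1):
            queue = self.queues[(dest, prio)]
            if queue:
                return queue.popleft()
        return None


steer = Steering()
steer.enqueue(Packet(protocol=ESP, dscp=0))
steer.enqueue(Packet(protocol=6, dscp=0))     # TCP, best effort
steer.enqueue(Packet(protocol=ESP, dscp=EF))  # IPsec, expedited
print(steer.dequeue("soc"))   # the expedited ESP packet comes out first
print(steer.dequeue("host"))  # the TCP packet
```

On real hardware the classification and queuing would be done by dedicated blocks rather than by software on a CPU; the sketch only shows the decision that is being made for each packet.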

Cooler than either of the above examples is the combination of the two. Cloud
service providers can combine offloads in a single add-in card, selecting a
Layerscape processor with more CPUs and interface lanes to simultaneously
offload storage and networking functions. With that level of
integration, the cloud company could say buh-bye to the expensive host
processor, adapting the add-in design to operate independently. In a surprise
turnabout, the Layerscape SoC then becomes the host of a storage-networking
system, playing not a supporting role but the primary one. Its
lower power, better integration and selected hardware offloads make it a
better-performing and more economical solution than a server host processor.

Such economics are ultimately as important as the improved efficiency and
performance gained from offloading functions to a Layerscape-based
accelerator. After all, cloud companies are big businesses and server
processors are expensive. Reducing their load frees cycles for other,
revenue-generating uses. Alternatively, it cuts the number of machines that
must be deployed, lowering cost. Like magic, SoC devices such as Layerscape
processors are improving data centers. NXP is engaged with cloud service
providers, helping them use Layerscape SoC devices to bring their data
centers to the next level of performance and efficiency.
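The two economic effects can be checked with back-of-the-envelope arithmetic. The sketch below reuses the article's earlier example of a 25-core host spending 20% of its cores on IPsec; the fleet demand of 10,000 application cores is a hypothetical figure chosen purely for illustration, and the function names are likewise made up.

```python
import math


def app_cores(cores_per_server, ipsec_share, offloaded):
    """Cores left for applications on one server."""
    busy = 0 if offloaded else round(cores_per_server * ipsec_share)
    return cores_per_server - busy


def servers_needed(demand_cores, cores_per_server, ipsec_share, offloaded):
    """Servers required to supply a given number of application cores."""
    usable = app_cores(cores_per_server, ipsec_share, offloaded)
    return math.ceil(demand_cores / usable)


# Article's example: 25 cores, 20% consumed by IPsec.
print(app_cores(25, 0.20, offloaded=False))  # 20 cores left for applications
print(app_cores(25, 0.20, offloaded=True))   # 25 cores left for applications

# Hypothetical fleet that must supply 10,000 application cores.
print(servers_needed(10_000, 25, 0.20, offloaded=False))  # 500
print(servers_needed(10_000, 25, 0.20, offloaded=True))   # 400
```

Under these assumptions, offloading either returns five cores per server to revenue-generating work or lets the same demand be met with a fifth fewer machines. The calculation ignores the cost of the accelerator card itself.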